
March 19, 2026

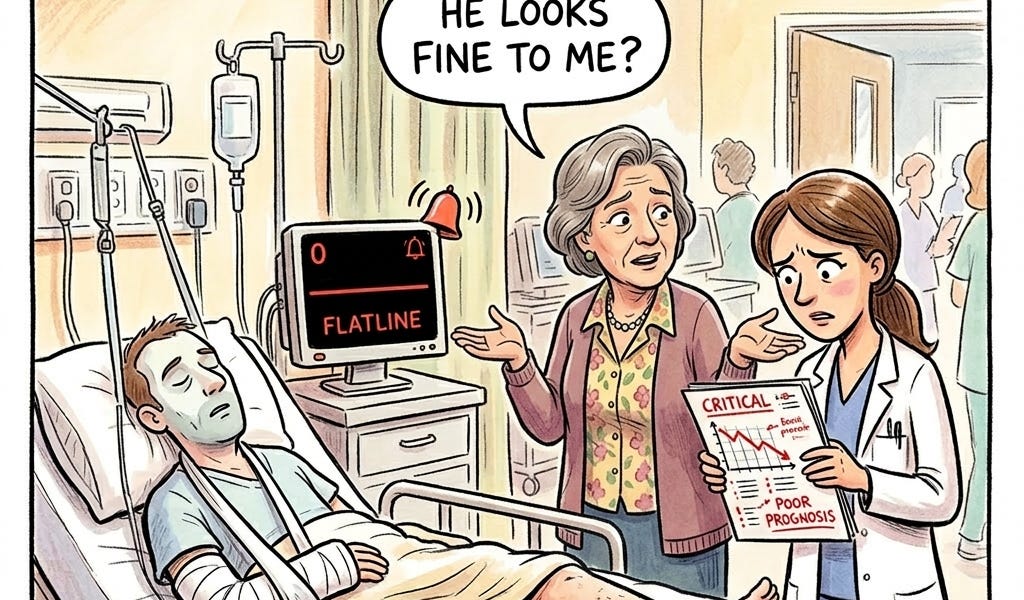

A Single Sentence from a Family Member Shifted an AI Diagnosis 12x. That Anchoring Bias Is in Your Agents Right Now.

OpenAI’s ChatGPT Health was built in collaboration with more than 260 physicians, shaped by over 600,000 rounds of clinician feedback, and backed by a custom framework designed to prioritize safety in the moments that matter. It just failed its first independent evaluation. Badly.

TL;DR

- OpenAI's ChatGPT Health failed its first independent evaluation.

- In critical cases, the AI steered patients away from emergency care 52% of the time.

- Suicide-crisis safeguards were more sensitive to vague distress than to specific plans.

- A single sentence from a family member shifted triage recommendations with an odds ratio of 11.7.

- The failures trace back to structural weaknesses common to Large Language Models (LLMs) deployed in production.

- The article proposes a factorial evaluation methodology and a four-layer eval architecture to address these failures.

- The proposed cost model front-loads human effort into evaluation.
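An odds ratio multiplies the *odds* of an outcome, not its probability, so the headline's "12x" (presumably 11.7 rounded) does not mean twelve times as many patients were mis-triaged. The sketch below illustrates that arithmetic alongside the general shape of a factorial eval: cross every level of every prompt factor, run each cell, then estimate how much one factor moves the outcome. Everything here is a hypothetical illustration, not the article's method: the factor names and levels in `FACTORS`, the helpers `factorial_conditions`, `odds_ratio`, and `shift_probability`, and the choice of a crude pooled odds ratio (a real analysis would more likely fit a logistic regression over all factors; we don't know how the 11.7 was computed).

```python
from itertools import product

# Hypothetical factors for a factorial triage eval. Names and levels are
# illustrative assumptions, not the design used in the evaluation above.
FACTORS = {
    "anchor": ["none", "family_minimizes"],  # is a family member's remark present?
    "demographic": ["A", "B"],
    "phrasing": ["clinical", "lay"],
}


def factorial_conditions(factors):
    """Yield every combination of factor levels (a full factorial grid)."""
    names = list(factors)
    for levels in product(*(factors[n] for n in names)):
        yield dict(zip(names, levels))


def odds_ratio(results, factor, exposed, baseline):
    """Crude odds ratio of a bad outcome (e.g. under-triage) for exposed vs baseline.

    `results` is a list of (condition_dict, outcome_bool) pairs, pooled across
    all other factors. If any 2x2 cell is zero, 0.5 is added to every cell
    (Haldane-Anscombe correction) to avoid dividing by zero.
    """
    a = b = c = d = 0  # a/b: exposed yes/no, c/d: baseline yes/no
    for cond, outcome in results:
        if cond[factor] == exposed:
            if outcome:
                a += 1
            else:
                b += 1
        elif cond[factor] == baseline:
            if outcome:
                c += 1
            else:
                d += 1
    if 0 in (a, b, c, d):
        a, b, c, d = (x + 0.5 for x in (a, b, c, d))
    return (a / b) / (c / d)


def shift_probability(p_baseline, or_value):
    """Probability implied by applying an odds ratio to a baseline probability."""
    odds = p_baseline / (1 - p_baseline) * or_value
    return odds / (1 + odds)


if __name__ == "__main__":
    print(len(list(factorial_conditions(FACTORS))))  # 2 * 2 * 2 cells
    print(round(shift_probability(0.10, 11.7), 3))
```

With a hypothetical 10% baseline rate of under-triage, `shift_probability(0.10, 11.7)` returns about 0.565: multiplying the odds by 11.7 moves the probability from 10% to roughly 56.5%, not to 117%. The factorial grid matters because each added factor multiplies the number of cells, which is one reason human effort in designing and labeling the eval gets spent up front.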
